Redis for Product Scaling

Overcome Database Bottlenecks and Latency

550+ Engagements Since 2006 — Trusted By

Most application performance problems trace back to one source: the primary database handling traffic it was never designed for. Read queries that run on every page load. Session lookups that hit the database on every authenticated request. Inventory checks during a flash sale that produce race conditions because nothing is serialising concurrent writes.
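Two of the patterns above can be sketched in a few lines: a cache-aside read for repeated queries, and an atomic counter for flash-sale stock. This is a minimal illustration, not a production design. `InMemoryCache`, `get_product`, `reserve_unit`, and the key names are hypothetical. The stand-in class only mirrors the `get`, `set`, `incr`, and `decr` method names of the redis-py client so the sketch runs without a server.

```python
import json
from typing import Callable, Optional


class InMemoryCache:
    """Hypothetical stand-in for a Redis client (assumption, not a real API).

    Method names mirror redis-py's get / set / incr / decr so a real client
    could be passed to the functions below. Expiry is accepted but ignored.
    """

    def __init__(self) -> None:
        self._data = {}

    def get(self, name: str) -> Optional[str]:
        return self._data.get(name)

    def set(self, name: str, value, ex: Optional[int] = None) -> bool:
        self._data[name] = str(value)
        return True

    def incr(self, name: str, amount: int = 1) -> int:
        new_value = int(self._data.get(name, "0")) + amount
        self._data[name] = str(new_value)
        return new_value

    def decr(self, name: str, amount: int = 1) -> int:
        return self.incr(name, -amount)


def get_product(cache, load_from_db: Callable[[int], dict],
                product_id: int, ttl_seconds: int = 60) -> dict:
    """Cache-aside read: try the cache first, fall back to the database."""
    key = f"product:{product_id}"  # hypothetical key scheme
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)  # cache hit, no database query
    product = load_from_db(product_id)  # cache miss, query the primary store
    cache.set(key, json.dumps(product), ex=ttl_seconds)
    return product


def reserve_unit(cache, sku: str) -> bool:
    """Reserve one unit of stock with an atomic decrement.

    On a real Redis server DECR is atomic, so concurrent callers cannot both
    claim the last unit. If the counter goes negative, the decrement is undone.
    """
    key = f"stock:{sku}"  # hypothetical key scheme
    remaining = cache.decr(key)
    if remaining < 0:
        cache.incr(key)  # give the unit back, stock was already exhausted
        return False
    return True
```

With a real server, the same functions would receive a redis-py client in place of `InMemoryCache`. The in-memory stand-in is not thread-safe. The atomicity that prevents overselling comes from Redis itself.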

CUSTOMER STORIES

Client Results and Success

OUR SERVICES

Our Redis Services for Product Scaling

Caching Strategies

Real-Time Messaging

Session Management

WHAT WE DO

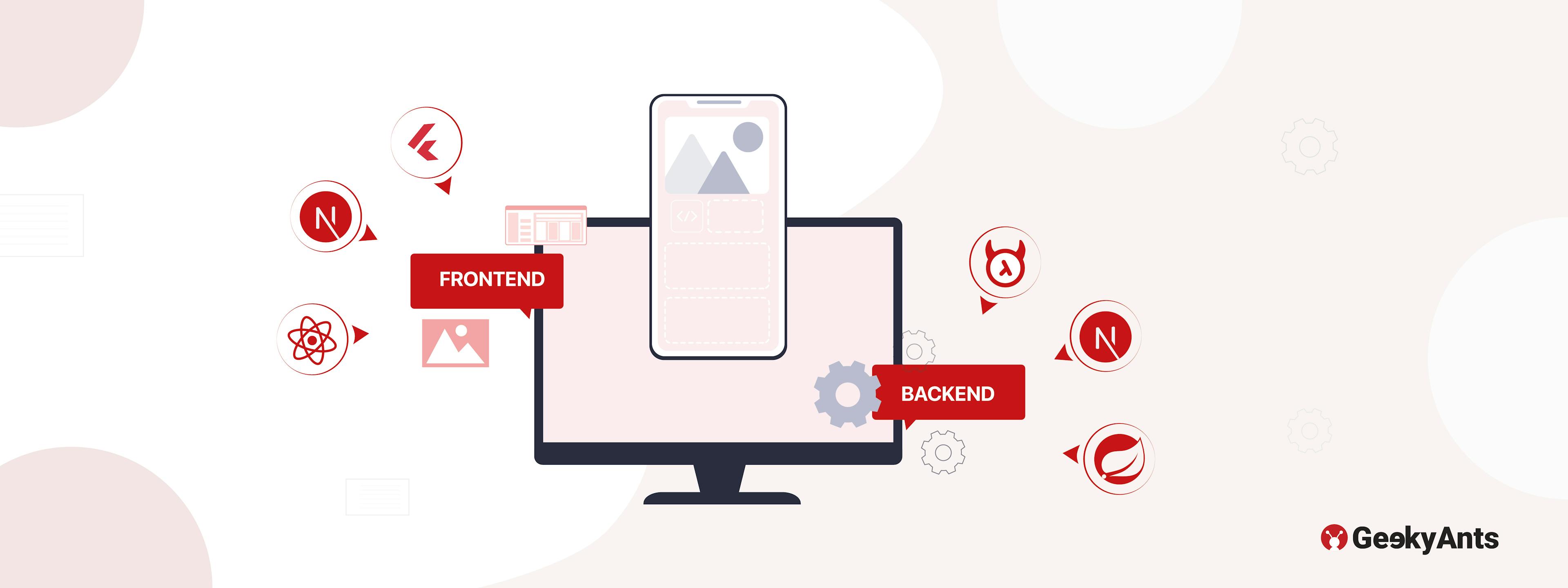

Complete Backend Engineering Services for Enterprises and Companies

Product Backend Studio

Enterprise Backend

Cloud & Platform Engineering

Data & Infrastructure Engineering

OUR RANGE OF IMPACT

Industry-Specific Redis Services

THE GEEKYANTS DIFFERENCE

Redis for Product Scaling by Engineers Who Have Delivered 1000+ Projects

FEATURED CONTENT

Our Latest Thinking in Backend Engineering

Nov 28, 2024

Revolutionizing Home Waxing: An Application Tailored for Convenience and Expertise

Revolutionize home waxing with GeekyAnts' app! Enjoy salon-grade products, expert tutorials, community support, and rewards—making grooming seamless, professional, and empowering.

Jul 19, 2024

The Importance of BFF in Frontend Development

BFF (Backend for Frontend) enhances frontend performance by optimizing data retrieval and formatting. It simplifies development, reduces browser load, and improves user experience.

Jan 10, 2023

New Tech Stacks Added To Our Profile In 2022, And Plans For 2023

Here's a look at the new technologies GeekyAnts added to its front-end and back-end stacks in 2022 and plans to add in 2023.

Sep 3, 2021

A Comprehensive Guide To Backend Tech

An introduction to the Backend, its significance to Fullstack development and much much more...

Nov 30, 2020

Accessing MongoDB Purely via Dart

Performing basic CRUD operations on your MongoDB database locally using the 'mongo_dart' package.

Aug 31, 2020

LiveView With Phoenix

My experience with Phoenix and the wonderful world of backend development.

Build with us. Accelerate your Growth.

- Customized solutions and strategies

- Faster-than-market project delivery

- End-to-end digital transformation services

Trusted By

Book a Discovery Call


What You Need to Know