May 26, 2023

Interface with AI in React Native

This article covers Ekaansh Arora's (Developer Advocate, Agora) talk on "Interface with AI in React Native," which he presented at the React Native Meetup held at GeekyAnts.

Why Discuss Interface with AI in React Native?

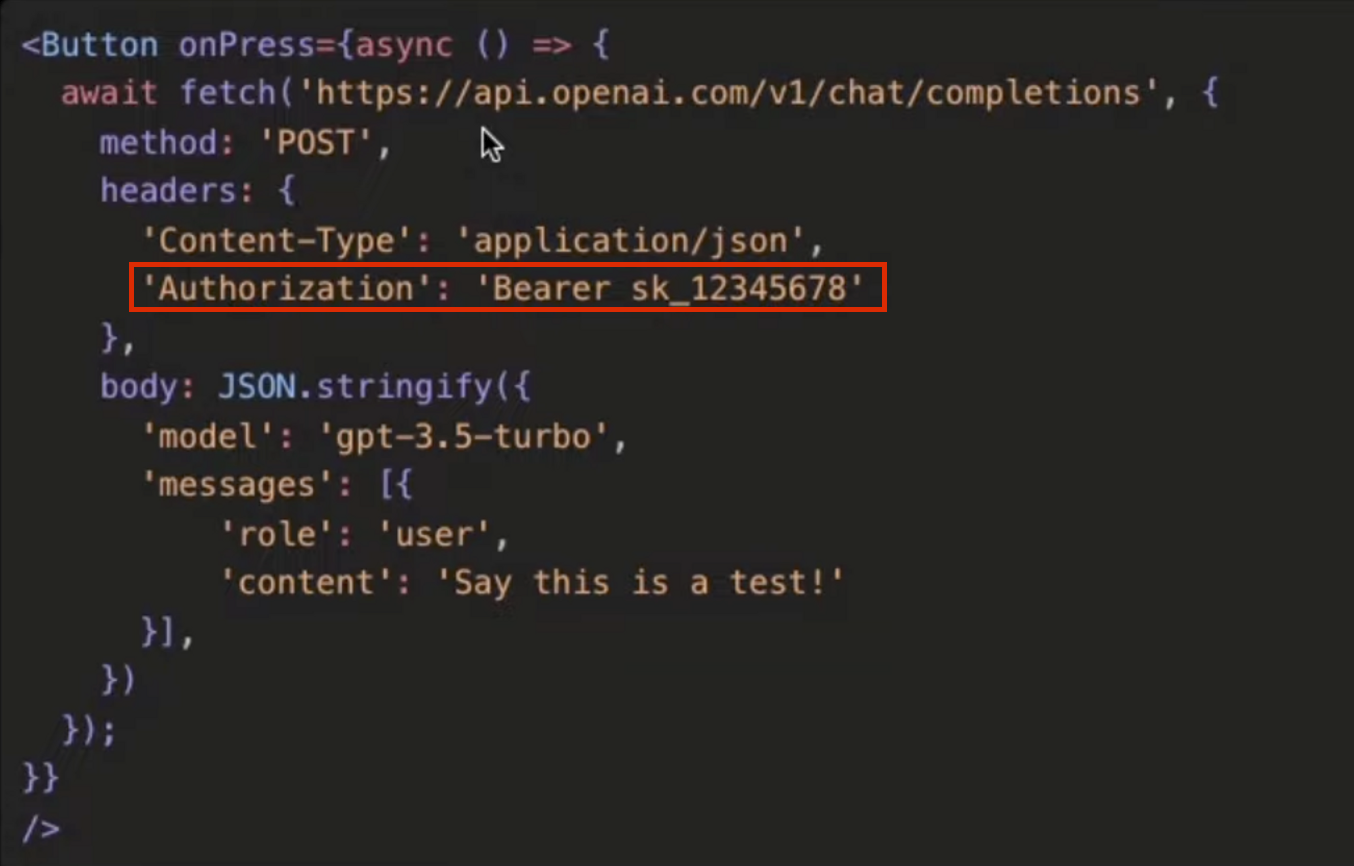

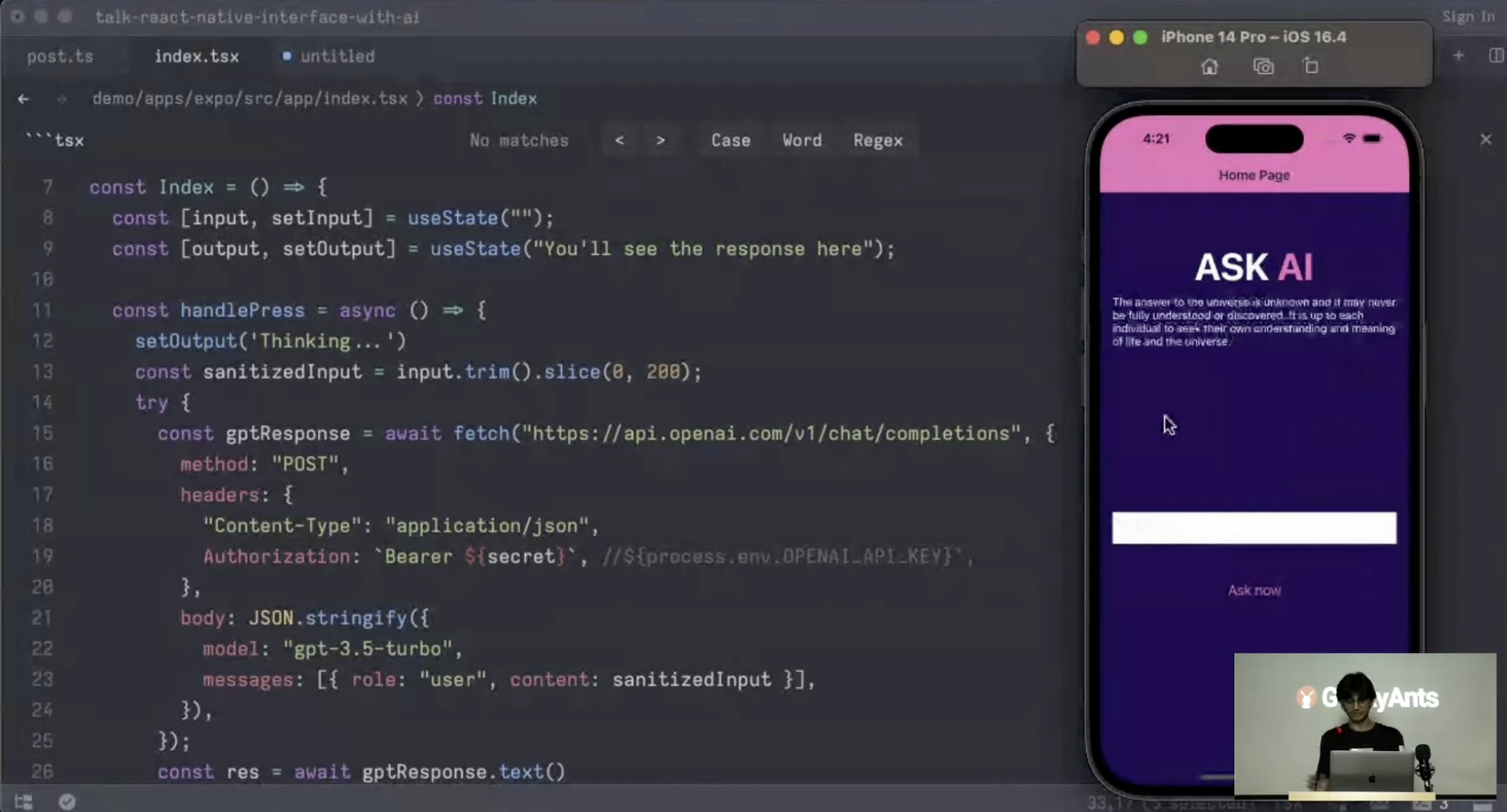

Let us start with an example. While building an AI app in React Native, you have a button with an onPress handler, and you call your API from that handler. At first glance, this seems fairly correct. But there is a key problem: you are exposing your API key in your application bundle.

It is tempting to assume that you can just put the key in your environment variables and that this is fine. Well, not really.

The problem is fairly common. Check out this tweet by Cyril Zakka, MD. The thread talks about how almost half the apps on the App Store are in some way leaking their API credentials.

Even if you compile your code and store the key in your Info.plist, anyone can still decompile the app and read the key string.

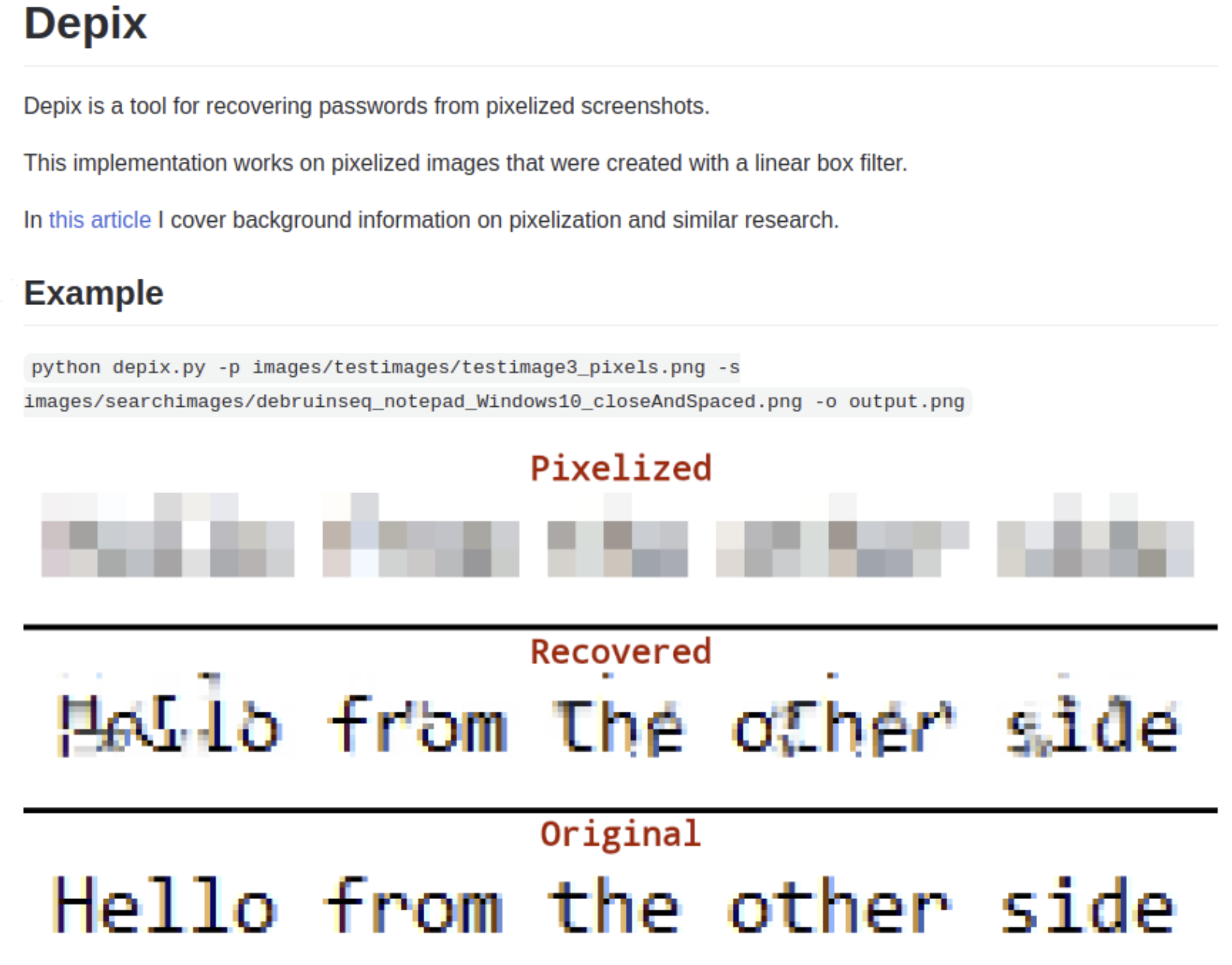

But what if you encode the key and store it in a different format? That does not seem to be a solution either: an attacker can always run a string search over the bundle, whatever format you choose, and compromise the security. Even if you go through the security documentation on Expo and use some libraries, it is likely to be futile. Pixelating sensitive data is also not a solution. There are multiple ways to get at the data.

This problem is real because “anything of value that can be hacked will be hacked”; it is only a matter of time and motivation. So if there is something in your bundle, and you are giving users access to that bundle, it is likely to be extracted.
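To make the string-search point concrete, here is a minimal sketch of what such a search could look like. Everything in it is an assumption for illustration: the key shape (`sk-` followed by 20 or more alphanumeric characters), the bundle fragment, and the key itself are made up, and real scanners use many more patterns than this one.

```typescript
// Hypothetical pattern: "sk-" followed by 20+ alphanumeric characters.
// Real scanners carry many provider-specific patterns.
const KEY_PATTERN = /sk-[A-Za-z0-9]{20,}/g;

// Returns every key-like substring found in the text of a shipped bundle.
function findKeyLikeStrings(bundleText: string): string[] {
  return bundleText.match(KEY_PATTERN) ?? [];
}

// A made-up fragment of a minified bundle with a made-up key baked in.
const bundleFragment =
  'var a="https://api.example.com";var k="sk-abcdefghijklmnopqrstuvwx";fetch(a,{headers:{Authorization:"Bearer "+k}});';

// The search needs no knowledge of the app's logic, only of the key's shape.
const leaked = findKeyLikeStrings(bundleFragment);
```

Minifying or renaming variables does not help here, because the search looks at string contents rather than at the code around them.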

The Solution: Do Not Put Anything There

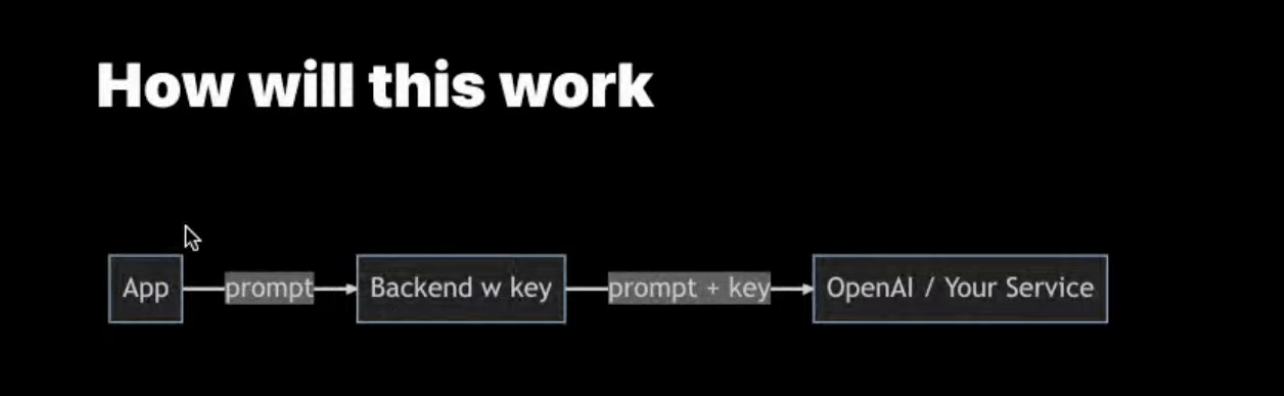

How can you steal what isn’t there? That is the overarching idea for the solution we are going to implement. So, it is time for the B-word — backend.

We will use TypeScript, Create T3 Turbo, Expo, and tRPC.

The solution is to drop a backend in the middle, which holds your key and, in effect, signs the request. Your app sends only the prompt to the backend; the backend adds the key and forwards the request to whatever service you want. In this way, you move the attack vector from the app to the backend. That matters because your app's source code, or at least your compiled app, is accessible to millions of users, whereas your backend, if you do things right, should not be accessible to anyone. So this is a much more secure way of doing things.

As an example, consider the same AI app in React Native. The front end keeps the same shape as before: a button has a handler that sends the request, and then we set some state to show the response on the screen. That is fairly standard React Native stuff.
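The split of responsibilities described above can be sketched as follows. This is an illustration under stated assumptions, not the code from the talk: the endpoint URL, the `Bearer` header scheme, the payload shapes, and the names `ClientPayload`, `UpstreamRequest`, and `buildUpstreamRequest` are all hypothetical.

```typescript
// What the app sends: only the prompt, never a credential.
interface ClientPayload {
  prompt: string;
}

// What the backend sends upstream (hypothetical shape).
interface UpstreamRequest {
  url: string;
  headers: Record<string, string>;
  body: string;
}

// Hypothetical endpoint standing in for whichever AI service you call.
const UPSTREAM_URL = "https://api.example.com/v1/complete";

// Runs on the backend only: attaches the secret key to the client's prompt.
function buildUpstreamRequest(payload: ClientPayload, apiKey: string): UpstreamRequest {
  return {
    url: UPSTREAM_URL,
    headers: {
      "Content-Type": "application/json",
      Authorization: `Bearer ${apiKey}`,
    },
    body: JSON.stringify({ prompt: payload.prompt }),
  };
}

// The payload leaving the app contains no key; the key is added server-side.
const fromApp: ClientPayload = { prompt: "Suggest a name for my app" };
const upstream = buildUpstreamRequest(fromApp, "key-loaded-from-server-env");
```

The key only ever appears as an argument on the server, so nothing in the app bundle can reveal it.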

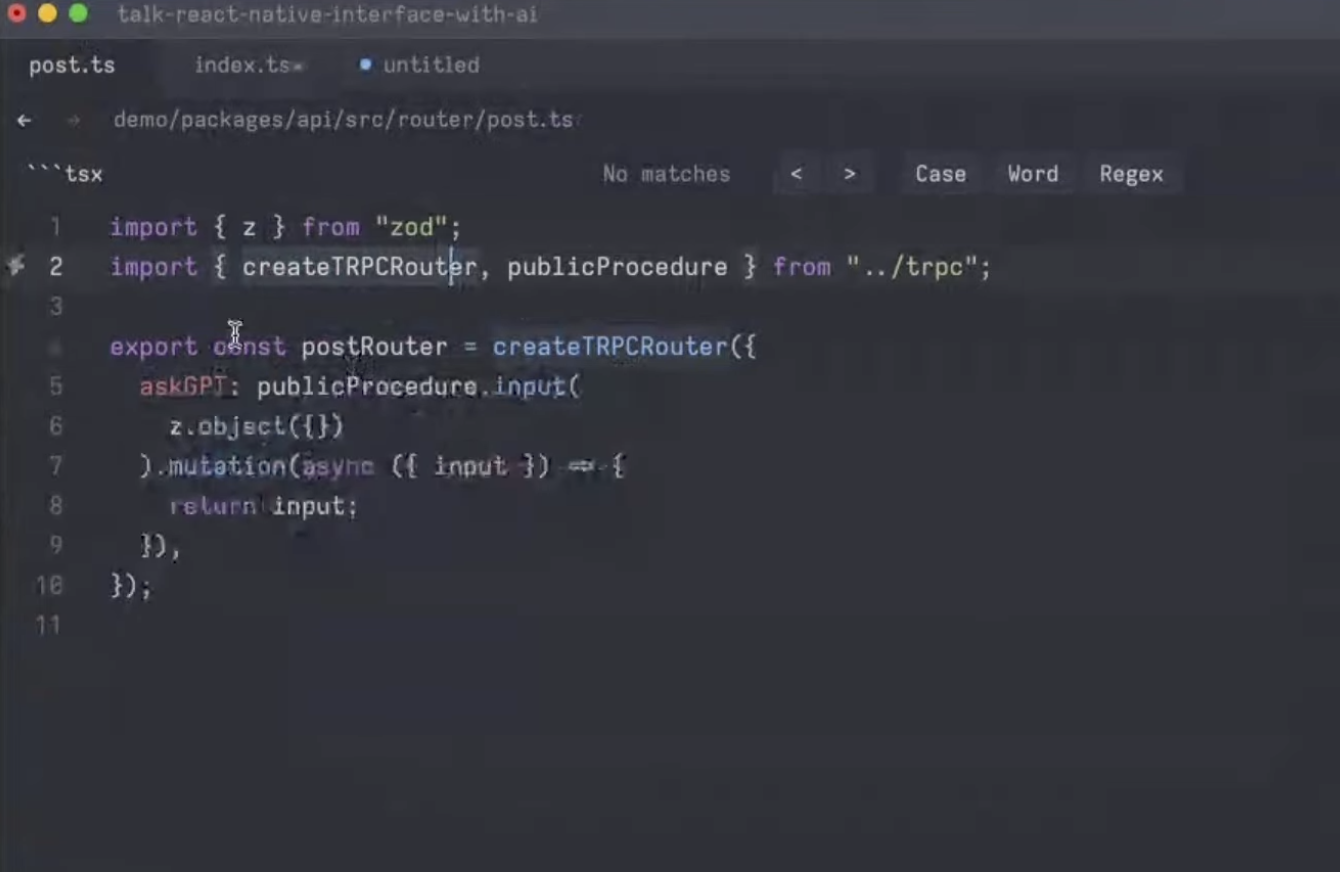

Once you have set up these apps, you get something called a tRPC router. The router has keys, and each key works like a function. So it is like defining a function in your backend and calling it from the front end as if it existed there.

The API layer in between is abstracted away for you. We can describe what input this function takes: say it accepts something called prompt, of type string. Instead of just returning that input, we take the API-calling code from the front end and drop it into the backend. We can trim the prompt and slice it to a maximum length so nothing bad can happen. Once that is done, the function returns a response that the front end can consume.
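The idea of a backend function that feels local to the front end can be sketched without any library. To be clear, this is not how tRPC is implemented and it does not use tRPC's API; `AppRouter`, `router`, `transport`, and `api` are hypothetical names, and the transport calls the backend in memory where a real app would make a network request.

```typescript
// The backend's functions, described once as a type both sides can share.
type AppRouter = {
  askGPT: (input: { prompt: string }) => Promise<{ text: string }>;
};

// Backend side: the actual implementation (the reply is a placeholder).
const router: AppRouter = {
  askGPT: async (input) => ({ text: `You asked: ${input.prompt}` }),
};

type Handler = (input: unknown) => Promise<unknown>;

// Stand-in for the network: looks up a backend function by name and calls it.
function transport(name: string, input: unknown): Promise<unknown> {
  const handler = (router as unknown as Record<string, Handler | undefined>)[name];
  if (!handler) {
    return Promise.reject(new Error(`Unknown procedure: ${name}`));
  }
  return handler(input);
}

// Front-end side: every property access becomes a call through the transport,
// while the AppRouter type keeps the inputs and outputs checked at compile time.
const api = new Proxy({} as AppRouter, {
  get: (_target, name) => (input: unknown) => transport(String(name), input),
});

// Reads like a local function call, but the work happens in the backend.
const reply: Promise<{ text: string }> = api.askGPT({ prompt: "Hello" });
```

The design point is that the front end depends only on the shared type, so renaming or retyping a backend function surfaces as a compile error in the app.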
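Here is a minimal, dependency-free sketch of the validate, trim, and slice steps, reading "trim and slice" as applying to the incoming prompt. The 200-character cap, the `Complete` type, and the injected `complete` function are assumptions for illustration; `askGPT` echoes the function name from the talk but is not the talk's code.

```typescript
// Hypothetical cap on prompt length; pick a value that suits your service.
const MAX_PROMPT_LENGTH = 200;

// Checks that the input is a string, then trims whitespace and cuts it down
// to the cap before it goes anywhere near the AI service.
function sanitizePrompt(input: unknown): string {
  if (typeof input !== "string") {
    throw new Error("prompt must be a string");
  }
  return input.trim().slice(0, MAX_PROMPT_LENGTH);
}

// Stand-in for the call to the AI service, injected so the handler is testable.
type Complete = (prompt: string) => Promise<string>;

// Backend function: sanitize the prompt, call the service, and return a plain
// object that the front end can consume.
async function askGPT(
  input: { prompt: unknown },
  complete: Complete
): Promise<{ text: string }> {
  const prompt = sanitizePrompt(input.prompt);
  const text = await complete(prompt);
  return { text };
}
```

In a tRPC router, the type check would normally live in the procedure's input validator rather than in a hand-written `typeof` test; the trimming and length cap would stay in the function body.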

Accessing The Function on the Front End

On the front end, you get an object called api from this library. It exposes the function you defined, AskGPT, and that function has something called useMutation. This is just React Query under the hood, and the rest is standard procedure.

That is how simple it is to write the backend: you just define functions that you can access on the front end. You do not have to set up Kubernetes-scale infrastructure.

Deployment

Coming to Kubernetes and deployment: since it is all serverless, there are no long-running servers to manage. You can execute one command and have a backend deployed, with nothing else to worry about. It is also highly scalable, with virtually limitless scale right from the start.

The Future — What More We Can Do

One idea is to move your backend to the edge, which you can do fairly easily. This becomes even faster and cheaper because the function does no data access of its own and only calls out to other services. Edge functions are also incredibly cheap: you can get 500,000 execution units per month for free. If you are using something like Solito and NativeBase, there are more advantages.

There are many possibilities. We just have to make cool things and inspire cooler things.

Check out the full talk by Ekaansh Arora here.
