Things Developers Need to Know About DevOps

Introduction

This article breaks down the talk by Robin Biju Thomas, Technical Architect at IoTIFY.io, during the DevOps Meetup held at GeekyAnts.

Let's take a brief look at DevOps and the related terminology that can improve our day-to-day workflows.

As developers, we may work in frontend, backend, full-stack, or DevOps roles. While working on a product, we handle the design, development, deployment, and debugging of various features, which requires visibility into the work of other developers and DevOps professionals, and regular interaction with them.

Although our common objective is to efficiently release features, there may be gaps in an organization that we can better bridge by cultivating cross-domain knowledge.

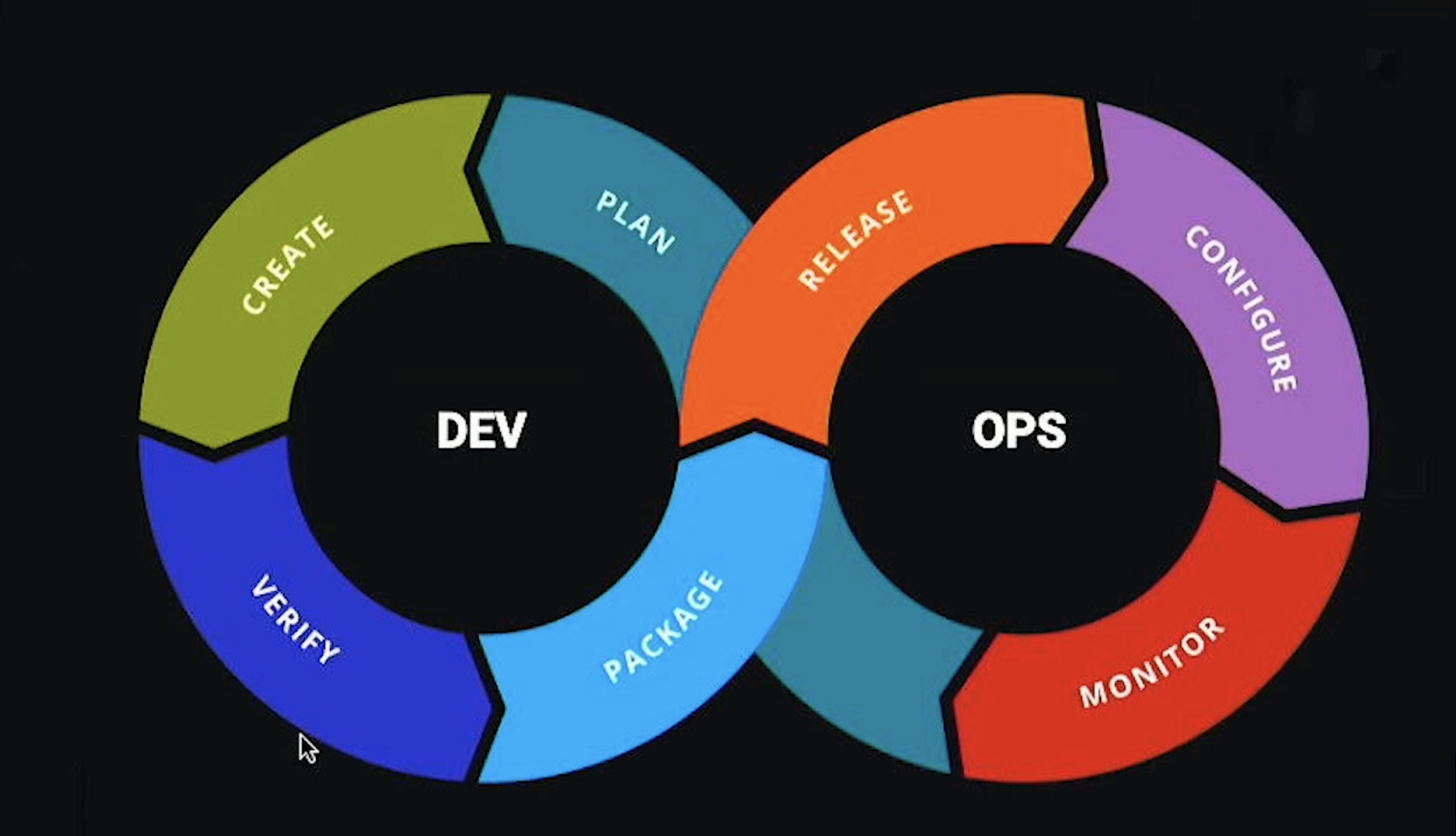

This is the standard diagram of the DevOps cycle. When most people think of DevOps, this is what immediately comes to mind: the plan, create, verify, package, release, configure, and monitor stages.

Developers approach this cycle from the create stage, with the priority of getting the feature out the door quickly; otherwise, their managers start screaming at them. From the operational point of view, the DevOps team is the first to put out any fires, such as when a test fails or part of the deployment needs extra troubleshooting or stabilization.

The goal of DevOps engineers, whether they work in platform engineering, SRE, core DevOps, or a related role, is to make the developer's job easier by simplifying the release cycle. However, conflicts can arise: developers may request a separate environment for each project when the budget does not allow for it. Priorities can differ.

Let’s Address the Problem At Hand

The skill sets required for DevOps and development are quite different. DevOps professionals spend a considerable amount of time writing YAML or Python for automation. Their expertise centers on root cause analysis: finding what is breaking, ensuring stability, anticipating issues that can happen in production, prioritizing, reducing risk, and creating rollback plans.

In contrast, developers prioritize agile development and cutting-edge technology. They focus on finding the best and fastest way to solve a problem, so their skill sets, priorities, and perspectives differ from those of DevOps professionals.

DevOps professionals also operate within the context of security. They think about which network ports are open, which incoming connections are allowed, and whether multiple ports or channels are exposed on a server. They are responsible for keeping the infrastructure secure and stable.

A well-known meme comes up in discussions of containerization, virtualization, and DevOps in general. Imagine that an issue emerges in the production environment and forces a rollback. A tester or DevOps professional then asks the developer what happened, only to hear, "It works on my machine."

How to better understand and anticipate issues as a developer:

- Build cross-domain knowledge

- Understand the operational context

- Communicate effectively

To gain hands-on experience and improve your work, you can try setting up a CI/CD pipeline or a Kubernetes cluster. This helps you make better decisions and optimize your workflows. It is easy for programmers to forget about the environment their code operates in, but immersing yourself in setting up these systems gives you a better understanding of how they work.

A Few Important Terms

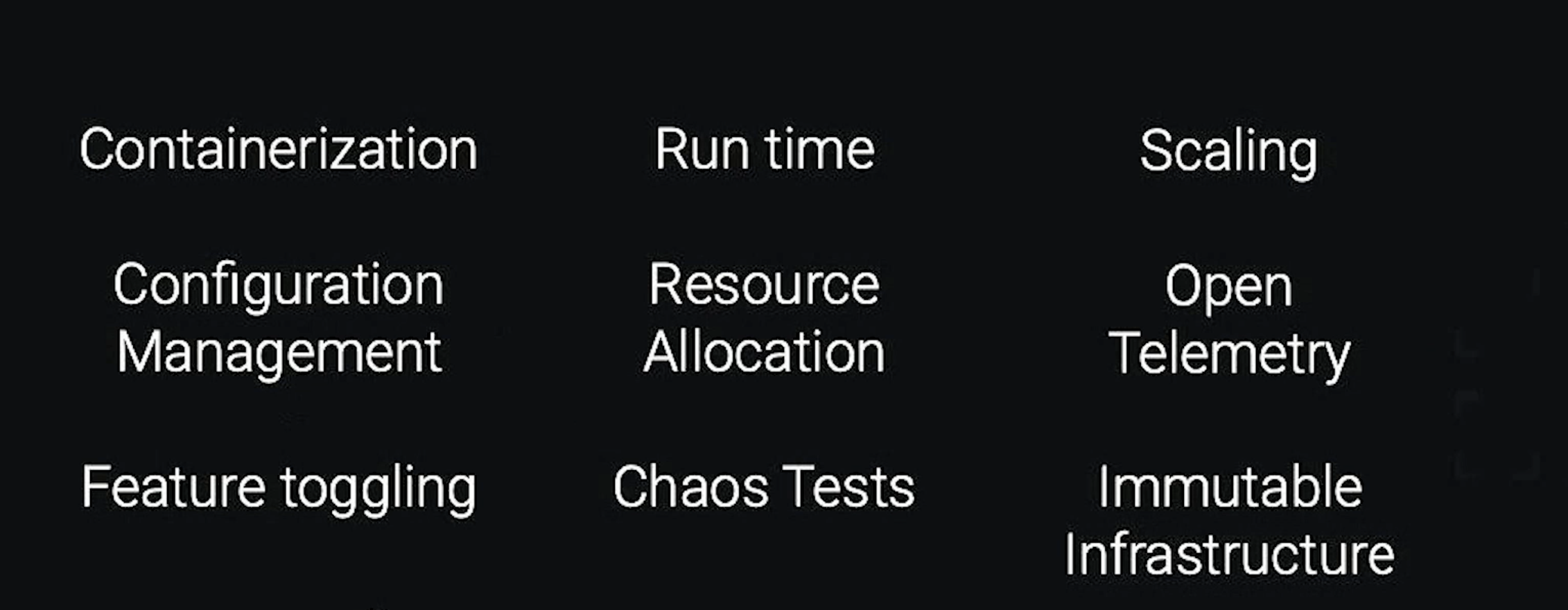

Containerization

Coming back to the meme: there's another version of it that says, "Then we'll ship your machine." In other words, if it works on your machine, we will ship your machine. So what does containerization essentially entail? You package your code together with its runtime, such as Node.js. Node.js is not a language but a runtime, and each of its versions has a particular feature set, so the version matters. If a DevOps person asks how you run your program, your answer should not be "click the play button in the IDE" but the actual command, such as npm run start or npm run build, or whatever script is defined in your package.json. You also need to consider which environment variables must be set for different parts of your application.
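To make this "how do you run it" information concrete, here is a minimal Python sketch that turns a description of how a Node.js service runs (runtime version, npm script, port, non-secret environment variables) into a Dockerfile. The `ExecutionContext` class and `render_dockerfile` helper are hypothetical names for illustration. The Dockerfile instructions themselves (`FROM`, `WORKDIR`, `COPY`, `RUN`, `ENV`, `EXPOSE`, `CMD`) are standard, while the `node:<version>-alpine` base image and the use of `npm ci` (which expects a package-lock.json) are assumptions about your project.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionContext:
    """Hypothetical description of how a Node.js service is run."""
    node_version: str                # pin the runtime version you actually tested with
    start_script: str = "start"      # the npm script defined in package.json
    port: int = 3000                 # port the app listens on
    env: dict = field(default_factory=dict)  # non-secret defaults only


def render_dockerfile(ctx: ExecutionContext) -> str:
    """Turn the execution context into a minimal Dockerfile."""
    lines = [
        f"FROM node:{ctx.node_version}-alpine",
        "WORKDIR /app",
        # Copy the manifests first so the dependency install layer can be cached.
        "COPY package*.json ./",
        "RUN npm ci",
        "COPY . .",
    ]
    # Bake in non-secret defaults; secrets should be injected at runtime instead.
    for key, value in sorted(ctx.env.items()):
        lines.append(f"ENV {key}={value}")
    lines.append(f"EXPOSE {ctx.port}")
    # Exec form: the same command you would type by hand, not the IDE play button.
    lines.append(f'CMD ["npm", "run", "{ctx.start_script}"]')
    return "\n".join(lines) + "\n"
```

Generating the file is not really the point; writing these facts down is. Once they are explicit, "it works on my machine" becomes "it works in this image."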

Configuration Management

Configuration is also an important aspect of your application. Some parts of your application may be configurable, and there are a couple of ways to supply that configuration, such as fetching it from a remote repository, instantiating it in code, or setting environment variables. Before running your application, consider which environment variables need to be set and whether any particular connections or network ports need to be opened. All of this makes up your execution context, which needs to be documented, especially any changes you made to that context during development.
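A small Python sketch of documenting and enforcing the environment-variable part of the execution context: required variables are checked at startup, and optional ones fall back to defaults. The variable names `DATABASE_URL`, `PORT`, and `LOG_LEVEL` are placeholders, not something the talk prescribes.

```python
import os
from dataclasses import dataclass
from typing import Mapping


class ConfigError(Exception):
    """Raised when the execution context is incomplete."""


@dataclass(frozen=True)
class AppConfig:
    database_url: str
    port: int
    log_level: str


# Hypothetical variable names; document the real ones alongside your code.
REQUIRED = ("DATABASE_URL",)
DEFAULTS = {"PORT": "8080", "LOG_LEVEL": "info"}


def load_config(env: Mapping[str, str] = os.environ) -> AppConfig:
    """Read configuration from environment variables, failing fast if any are missing."""
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        # Fail at startup with a clear message instead of at the first database call.
        raise ConfigError(f"missing required environment variables: {', '.join(missing)}")
    merged = {**DEFAULTS, **env}  # values from the environment override the defaults
    return AppConfig(
        database_url=merged["DATABASE_URL"],
        port=int(merged["PORT"]),
        log_level=merged["LOG_LEVEL"],
    )
```

Failing fast with a list of missing variables gives whoever deploys the service an actionable message rather than a crash deep inside the application.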

Feature Toggling

Feature toggling is another important intersection of development and DevOps. When you implement a feature, you need to plan whether it should be enabled for everyone or only for certain users. This concept is called feature toggling or remote configuration management: the runtime configuration is fetched from an external source, such as a DynamoDB table or AWS AppConfig.
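A hedged Python sketch of a feature-flag check with a user allowlist and a percentage rollout. In practice the `flags` dictionary would be fetched from an external store such as DynamoDB or AWS AppConfig; here a plain dictionary stands in for it, and the `Flag` fields are assumptions, not any particular service's schema.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Flag:
    enabled: bool = False                    # master switch
    rollout_percent: int = 0                 # 0 to 100: share of users who get the feature
    allow_users: set = field(default_factory=set)  # always on for these users (e.g. testers)


def bucket(flag_name: str, user_id: str) -> int:
    """Map a user to a stable bucket in [0, 100) so rollouts don't flicker between requests."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:4], "big") % 100


def is_enabled(flags: dict, name: str, user_id: str) -> bool:
    flag = flags.get(name)
    if flag is None or not flag.enabled:
        return False  # unknown or switched-off flags default to off
    if user_id in flag.allow_users:
        return True
    return bucket(name, user_id) < flag.rollout_percent
```

Hashing the flag name together with the user ID keeps each user in the same bucket across requests, so raising `rollout_percent` from 10 to 20 only adds users and never switches existing ones off.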

OpenTelemetry

This is an interesting area these days because it provides insight into what is happening at runtime. OpenTelemetry defines an open standard for recording and capturing telemetry such as logs, metrics, and traces.
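To show what a trace is, here is a toy Python tracer built only on the standard library. It is not the OpenTelemetry API; it only illustrates concepts OpenTelemetry standardizes: every span carries its trace's ID, records its parent span, and measures its own duration. The `span` helper and the `finished` list (a stand-in for an exporter) are hypothetical.

```python
import contextvars
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass
from typing import Iterator, Optional


@dataclass
class Span:
    name: str
    trace_id: str             # shared by every span in one request
    span_id: str              # unique to this unit of work
    parent_id: Optional[str]  # None for the root span
    duration_ms: float = 0.0


# Tracks the active span per thread or async task, so nesting works without passing it around.
_current_span = contextvars.ContextVar("current_span", default=None)
finished: list = []  # stand-in for an exporter that would ship spans to a backend


@contextmanager
def span(name: str) -> Iterator[Span]:
    parent = _current_span.get()
    current = Span(
        name=name,
        trace_id=parent.trace_id if parent else uuid.uuid4().hex,  # a root span starts a new trace
        span_id=uuid.uuid4().hex[:16],
        parent_id=parent.span_id if parent else None,
    )
    token = _current_span.set(current)
    start = time.perf_counter()
    try:
        yield current
    finally:
        current.duration_ms = (time.perf_counter() - start) * 1000
        _current_span.reset(token)
        finished.append(current)
```

With the real OpenTelemetry SDK, a tracer and an exporter from your language's SDK take the place of these helpers, and the spans can be sent to any compatible backend.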

Immutable Infrastructure

This goes hand in hand with infrastructure as code. Instead of modifying servers in place, you use the same image, with the same flavor or distribution of Linux and the same environment, in production, staging, testing, and development.
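One way to check immutability in practice, sketched in Python under simple assumptions: identify each build artifact by a content digest (container registries identify images by sha256 digests in the same way) and verify that every environment runs exactly the digest that was built and tested. The helper names and the `deployed` mapping are hypothetical; in a real setup the digests would come from your registry and deployment tooling.

```python
import hashlib


def image_digest(artifact: bytes) -> str:
    """Content-address a build artifact; any change produces a different digest."""
    return "sha256:" + hashlib.sha256(artifact).hexdigest()


def drifted_environments(built: str, deployed: dict) -> list:
    """Return the environments that are not running the exact artifact that was built."""
    return sorted(env for env, digest in deployed.items() if digest != built)
```

An environment that was patched in place shows up immediately, because its digest no longer matches the one that passed testing.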

Chaos Testing

Pioneered by Netflix, chaos testing means deliberately breaking things in your staging environment so that you have better visibility into what happens when something breaks in production, and can plan and budget accordingly.
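A minimal Python sketch of the idea: wrap a dependency so it fails with a configurable probability, then check that the calling code (here a simple retry) behaves as intended. This is a toy fault injector, not Netflix's tooling; `chaos`, `call_with_retry`, and `InjectedFault` are hypothetical names, and a seeded `random.Random` keeps runs reproducible.

```python
import random
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class InjectedFault(Exception):
    """Marks failures introduced on purpose, so they are easy to tell apart in logs."""


def chaos(func: Callable[[], T], failure_rate: float, rng: random.Random) -> Callable[[], T]:
    """Wrap a dependency call so that it fails with probability `failure_rate`."""
    def wrapped() -> T:
        if rng.random() < failure_rate:
            raise InjectedFault(f"injected failure in {func.__name__}")
        return func()
    return wrapped


def call_with_retry(func: Callable[[], T], attempts: int) -> T:
    """The resilience behavior under test: retry a few times before giving up."""
    last_error: Optional[Exception] = None
    for _ in range(attempts):
        try:
            return func()
        except InjectedFault as err:
            last_error = err
    raise RuntimeError(f"gave up after {attempts} attempts") from last_error
```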

Scaling

This is an important consideration for your application. Determine whether it scales horizontally (more instances) or vertically (bigger machines), and design it so that when another instance comes up, it has its own identity, does not duplicate the database connections or work of other instances, and consumes queued jobs without running into race conditions.
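The queuing concern can be illustrated with a Python sketch in which several worker "instances" (threads here) share one job store. Each instance generates its own ID, and `claim` makes check-and-take a single atomic step, so no job is processed twice. The in-memory `JobStore` is a hypothetical stand-in; across real processes or machines, the same guarantee has to come from the shared queue or database, for example PostgreSQL's `SELECT ... FOR UPDATE SKIP LOCKED`.

```python
import threading
import uuid


class JobStore:
    """In-memory stand-in for a shared queue or jobs table."""

    def __init__(self, jobs):
        self._pending = list(jobs)
        self._lock = threading.Lock()
        self.claimed_by = {}  # job -> ID of the instance that took it

    def claim(self, instance_id: str):
        """Atomically hand out the next job; returns None when the queue is empty."""
        with self._lock:  # check-and-take in one step, so two instances can't grab the same job
            if not self._pending:
                return None
            job = self._pending.pop()
            self.claimed_by[job] = instance_id
            return job


def run_instance(store: JobStore, processed: list) -> None:
    instance_id = f"worker-{uuid.uuid4().hex[:8]}"  # each instance gets its own identity
    while (job := store.claim(instance_id)) is not None:
        processed.append((instance_id, job))
```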

Optimizations

As a developer, there are some important things to keep in mind. First, write down every change you make to get your application up and running. In DevOps, the goal is to automate as much as possible, and an effective way to do that is to set up an automated build and deployment pipeline using tools such as Atlassian's Bitbucket Pipelines, AWS CodeBuild, Jenkins, or Argo CD.
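The core behavior of an automated build pipeline, sketched in Python: run each step's command in order and stop at the first failure, so later steps never run on a broken build. Tools like Jenkins or AWS CodeBuild add much more (triggers, caching, artifacts, secrets); `run_pipeline` and `StepResult` are hypothetical names, not any tool's API.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str
    returncode: int


def run_pipeline(steps: list) -> list:
    """Run (name, argv) steps in order and stop at the first failing step."""
    results = []
    for name, command in steps:
        # argv list, no shell: the command runs exactly as written down.
        completed = subprocess.run(command)
        results.append(StepResult(name, completed.returncode))
        if completed.returncode != 0:
            print(f"step {name!r} failed with exit code {completed.returncode}", file=sys.stderr)
            break  # never run later steps (such as deploy) after a failed build or test
    return results
```

For a Node.js project, the steps might be `[("install", ["npm", "ci"]), ("test", ["npm", "test"]), ("build", ["npm", "run", "build"])]`, which is exactly the list of changes worth writing down in the first place.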

The importance of replicability cannot be overstated: without it, automation becomes difficult. Reproducing bugs is a good example. If a user or tester reports a bug without steps to replicate it, it is difficult to act on that report. Common issues, such as race conditions introduced by changes, should be anticipated. For instance, if you bring up a cluster and the application comes online before the database container is ready, the application needs to handle that edge case. Ignoring these issues is like waiting for a disaster to happen.
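For the start-up ordering problem above, a common mitigation is to have the application wait for its dependency with retries and backoff instead of crashing. A minimal Python sketch, assuming the dependency is reachable over TCP; `wait_for_port` is a hypothetical helper. An open port does not guarantee the database is ready to serve queries, so a stricter check would run a real query, and Docker Compose can also gate start-up with a healthcheck and `depends_on` using `condition: service_healthy`.

```python
import socket
import time


def wait_for_port(host: str, port: int, attempts: int = 6,
                  delay: float = 0.5, max_delay: float = 5.0) -> bool:
    """Return True once host:port accepts TCP connections, False if it never does."""
    for attempt in range(attempts):
        try:
            with socket.create_connection((host, port), timeout=1.0):
                return True  # the dependency is accepting connections
        except OSError:
            if attempt < attempts - 1:
                # Exponential backoff, capped so the total wait stays bounded.
                time.sleep(min(delay * 2 ** attempt, max_delay))
    return False
```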

It is vital to anticipate potential issues and think about how your application or feature will run in different environments. Earlier, we discussed how to put together your runtime, configuration, environment variables, and other cloud-native components. Switching your perspective and understanding environments is key to successful development and deployment.

The Cloud-Native Way

Currently, the focus is heavily on microservices. Infrastructure as a service and software as a service are common paradigms, and function as a service is gaining traction. Among these options, containerizing with a Dockerfile is one of the most commonly adopted approaches. In a microservices model, or if you want to run anything on Kubernetes, there are options like Knative. You could run everything on VMware virtual machines, but that accrues technical debt. If instead you ship your application with a Dockerfile and a Docker Compose file, you make life much easier for yourself and your operations team down the line. It also ensures that your code can run on a vanilla Linux distribution.

Containerized dev environments can be a bit tricky. There are debates about whether to use Docker Compose or Kubernetes for dev environments. Lightweight Kubernetes setups such as k3s or minikube are an option, but a containerized dev environment comes with its own considerations, especially if your deployment also runs on Kubernetes.

As for scaling, we have already discussed how to manage configuration. If you are using HashiCorp tools (such as Consul or Vault) or Kubernetes (ConfigMaps and Secrets), you can keep configuration within those platforms or use third-party solutions.
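A Python sketch of layered configuration under stated assumptions: built-in defaults, overridden by files in a mounted directory (Kubernetes can mount a ConfigMap as a volume with one file per key), overridden in turn by environment variables. The function name and precedence order are illustrative choices, not a platform requirement.

```python
from pathlib import Path
from typing import Dict, Mapping


def load_layered_config(
    defaults: Mapping[str, str],
    mount_dir: Path,
    env: Mapping[str, str],
) -> Dict[str, str]:
    """Merge configuration with precedence: defaults < mounted files < environment."""
    config = dict(defaults)
    if mount_dir.is_dir():
        for entry in sorted(mount_dir.iterdir()):
            # One file per key; skip hidden bookkeeping entries and subdirectories.
            if entry.is_file() and not entry.name.startswith("."):
                config[entry.name] = entry.read_text().strip()
    # Environment variables win for known keys, so one value can be overridden at deploy time.
    for key in config:
        if key in env:
            config[key] = env[key]
    return config
```

Only keys that already exist in the defaults or the mounted files are read from the environment, so unrelated variables such as PATH never leak into the configuration.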

Summing Up

While it may seem overwhelming to bridge the gaps between developers and DevOps professionals, it is important to cultivate cross-domain knowledge and understand the operational context. Effective communication is key to optimizing workflows and anticipating potential issues. As developers, we can gain hands-on experience by setting up a CI/CD pipeline or a Kubernetes cluster and by immersing ourselves in the environment our code operates in.
