May 27, 2024

Implementing Speech-to-Text and Voice Command Recognition in Flutter: Enhancing User Interaction

Discover how to integrate speech-to-text in your Flutter apps, making them smarter and more fun. Learn how to transform spoken words into magic and explore exciting use cases.

In a world where voice assistants are our daily companions and we can hardly remember the last time we typed out a full sentence, integrating speech-to-text functionality into your Flutter apps is not just cool — it is almost essential. Imagine chatting with your app as effortlessly as you do with your best friend. In this post, we are diving into the awesome world of speech-to-text in Flutter. Get ready to explore how you can make your app not just smarter, but also a lot more fun to use. Let us turn those spoken words into magic on your screen and discuss some of the coolest use cases that will blow your users' minds.

Why Speech-to-Text?

Speech-to-text technology allows users to interact with applications using their voice. This can be particularly useful in various scenarios:

- Hands-Free Interaction: Users can perform tasks without touching the screen.

- Accessibility: Makes the app usable for people who find typing difficult, such as users with motor or visual impairments.

- Efficiency: Speeds up data entry and other interactions.

Step-by-Step Guide to Implementing Speech-to-Text in Flutter

Step 1: Setting Up Your Flutter Project

First, let us create a new Flutter project by running `flutter create` followed by your project name.

Next, add the speech_to_text package under `dependencies` in your pubspec.yaml (running `flutter pub add speech_to_text` adds the entry for you).

Run `flutter pub get` to install the dependencies.

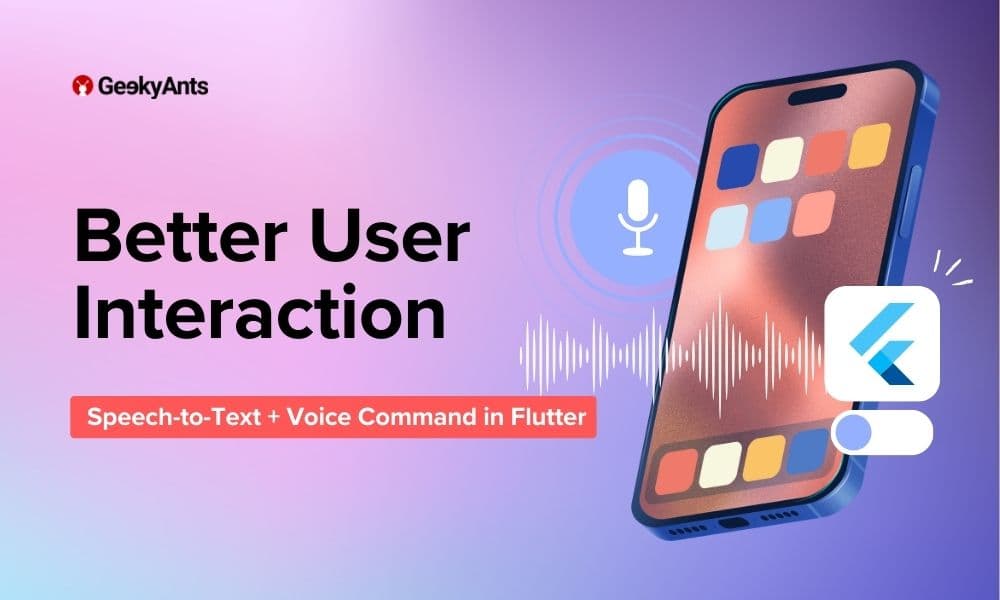

Step 2: Configuring Permissions

For Android, open android/app/src/main/AndroidManifest.xml and add the microphone permission (`android.permission.RECORD_AUDIO`) as a `<uses-permission>` entry:

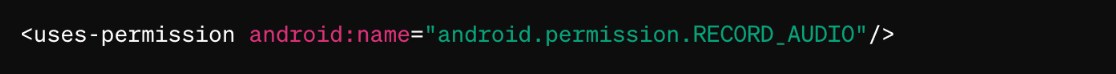

Next, find the following two lines:

Update these to the versions shown in the example below:

For iOS, the permissions this component relies on must also be enabled in the build settings. Inside ios/Podfile, locate the line `flutter_additional_ios_build_settings(target)` and add the following below it:

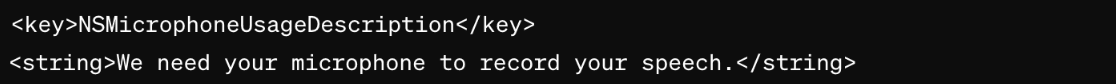

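Open ios/Runner/Info.plist and add the usage descriptions iOS displays when it asks for access: `NSMicrophoneUsageDescription` for the microphone and `NSSpeechRecognitionUsageDescription` for speech recognition.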

Step 3: Implementing the Speech-to-Text Functionality

Let us dive into the implementation. Here is the complete code for the main.dart file:
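A minimal main.dart along these lines is sketched below. It assumes the speech_to_text package's `SpeechToText` class (`initialize`, `listen`, `stop`, `isListening`) and `SpeechRecognitionResult.recognizedWords`; the widget names `SpeechApp` and `SpeechScreen` and the field `_lastWords` are illustrative, not the article's exact code. It needs the Flutter SDK and the package to run.

```dart
import 'package:flutter/material.dart';
import 'package:speech_to_text/speech_recognition_result.dart';
import 'package:speech_to_text/speech_to_text.dart';

void main() => runApp(const SpeechApp());

class SpeechApp extends StatelessWidget {
  const SpeechApp({super.key});

  @override
  Widget build(BuildContext context) {
    return const MaterialApp(home: SpeechScreen());
  }
}

class SpeechScreen extends StatefulWidget {
  const SpeechScreen({super.key});

  @override
  State<SpeechScreen> createState() => _SpeechScreenState();
}

class _SpeechScreenState extends State<SpeechScreen> {
  final SpeechToText _speech = SpeechToText();
  bool _speechEnabled = false;
  String _lastWords = '';

  @override
  void initState() {
    super.initState();
    _initSpeech();
  }

  // Initializes the recognizer once; the OS permission prompts appear here.
  // `initialize` resolves to false if recognition is unavailable or denied.
  Future<void> _initSpeech() async {
    final enabled = await _speech.initialize(
      // Rebuild whenever the listening status changes (for example when
      // listening stops after a pause) so the button icon stays in sync.
      onStatus: (_) {
        if (mounted) setState(() {});
      },
    );
    if (mounted) setState(() => _speechEnabled = enabled);
  }

  Future<void> _startListening() async {
    await _speech.listen(onResult: _onSpeechResult);
    setState(() {});
  }

  Future<void> _stopListening() async {
    await _speech.stop();
    setState(() {});
  }

  // Called with partial results while the user speaks, then with the final one.
  void _onSpeechResult(SpeechRecognitionResult result) {
    setState(() => _lastWords = result.recognizedWords);
  }

  @override
  Widget build(BuildContext context) {
    final listening = _speech.isListening;
    final String message;
    if (!_speechEnabled) {
      message = 'Speech recognition is not available';
    } else if (_lastWords.isEmpty) {
      message = 'Tap the microphone and start speaking';
    } else {
      message = _lastWords;
    }
    return Scaffold(
      appBar: AppBar(title: const Text('Speech to Text')),
      body: Center(
        child: Padding(
          padding: const EdgeInsets.all(16),
          child: Text(message, style: const TextStyle(fontSize: 20)),
        ),
      ),
      floatingActionButton: FloatingActionButton(
        // Disabled until initialization succeeds.
        onPressed: !_speechEnabled
            ? null
            : (listening ? _stopListening : _startListening),
        child: Icon(listening ? Icons.mic : Icons.mic_off),
      ),
    );
  }
}
```

Checking the result of `initialize` before enabling the button means the screen stays usable, with an explanatory message, when the user denies the microphone permission or the device has no speech recognizer.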

Step 4: Testing Your Implementation

Run your app on a real device or an emulator with `flutter run`.

Ensure you grant microphone permissions when prompted. Press the button and start speaking. The recognized text should be displayed on the screen.

Enhancing Your App with Voice Commands

In the previous sections, we explored how to implement speech-to-text functionality in your Flutter app, which is pretty cool by itself. But why stop there? Let us take it up a notch and recognize specific voice commands to perform actions like opening Gmail, launching websites, or even searching on Google.

Here is how we can implement these voice commands in our Flutter app:

1. Add the URL Launcher Dependency: To open URLs, we use the url_launcher package. Add it under `dependencies` in your pubspec.yaml file, or run `flutter pub add url_launcher`.

2. Handle Commands in Code: Update your _performAction method to recognize and act on specific voice commands. Here is the updated method:

3. Integrate Command Recognition: Connect speech recognition to the command handler by updating your _onSpeechResult method so that it passes the recognized words to _performAction:

4. Testing Your Implementation: Run your app and try out the commands:

- "Open Gmail"

- "Search cars"

- "Open YouTube"
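The command matching described in steps 2 and 3 can be sketched as a pure Dart function, which keeps it testable without a device or a browser. The name `commandToUri`, the exact phrases it accepts (including "search for" and "go to" variants), and the target URLs are assumptions for illustration:

```dart
/// Hypothetical helper: maps a recognized phrase to the URL a voice command
/// should open, or returns null if the phrase is not a known command.
Uri? commandToUri(String spokenText) {
  // Normalize: recognizers may return mixed case and stray whitespace.
  final command = spokenText.trim().toLowerCase();

  // Fixed phrases that open well-known sites (assumed phrase list).
  const fixedCommands = {
    'open gmail': 'https://mail.google.com',
    'open youtube': 'https://www.youtube.com',
  };
  final fixed = fixedCommands[command];
  if (fixed != null) return Uri.parse(fixed);

  // "search cars" or "search for cars" -> Google search for the rest.
  final search = RegExp(r'^search (?:for )?(.+)$').firstMatch(command);
  if (search != null) {
    return Uri.https('www.google.com', '/search', {'q': search.group(1)!});
  }

  // "go to google.com" -> open the spoken domain over HTTPS.
  final goTo = RegExp(r'^go to (\S+)$').firstMatch(command);
  if (goTo != null) return Uri.https(goTo.group(1)!, '/');

  return null;
}
```

Inside the widget, `_performAction` could pass the returned `Uri` to url_launcher, for example with `await launchUrl(uri, mode: LaunchMode.externalApplication);`, and simply ignore phrases for which the function returns `null`. Matching whole normalized phrases, rather than checking whether the text merely contains a keyword, keeps ordinary dictation from accidentally triggering an action.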

Implementing Voice Commands

By recognizing specific phrases, we can make our app respond to commands like "open Gmail," "go to google.com," or "search for cars." This turns our app into an interactive assistant that responds to the user’s voice, enhancing the user experience significantly.
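One detail matters when reacting to phrases like these: by default, speech_to_text calls the result callback with partial results while the user is still talking, and `SpeechRecognitionResult.finalResult` is true only for the last one. A minimal sketch of a guard for that is shown below; the helper name `commandFromResult` is hypothetical, and `_performAction` in the comment refers to the method from step 2.

```dart
/// Hypothetical helper for an updated _onSpeechResult: returns the command
/// to act on, or null if this result should only update the on-screen text.
/// [recognizedWords] and [isFinal] mirror the recognizedWords and
/// finalResult fields of speech_to_text's SpeechRecognitionResult.
String? commandFromResult(String recognizedWords, bool isFinal) {
  // Partial results arrive repeatedly while the user is still speaking;
  // acting on them could trigger the same command several times.
  if (!isFinal) return null;
  final command = recognizedWords.trim().toLowerCase();
  return command.isEmpty ? null : command;
}

// Possible use inside the widget's state class (not runnable on its own):
//
// void _onSpeechResult(SpeechRecognitionResult result) {
//   setState(() => _lastWords = result.recognizedWords);
//   final command =
//       commandFromResult(result.recognizedWords, result.finalResult);
//   if (command != null) _performAction(command);
// }
```

The on-screen text still updates live from every partial result; only the action waits, so saying "open Gmail" opens it once.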

Use Cases for Speech-to-Text

Now that we have the basic functionality implemented, let us explore some use cases for speech-to-text in apps:

- Voice Commands: Enable users to control the app with their voice, for example to navigate between screens or trigger actions.

- Voice Input for Forms: Allow users to fill out forms using their voice. This can be particularly useful for accessibility.

- Voice-Activated Search: Implement voice search functionality in your app to provide a hands-free search experience.

- Voice Notes: Enable users to take voice notes, which can be transcribed and saved for later reference.

For a hands-on look at the complete workflow, explore the implementation in this GitHub repository.

Conclusion

Adding voice command recognition makes your app more interactive and intuitive: users can control it with their voice, which is both convenient and more accessible. More broadly, speech-to-text enables hands-free interaction, which pays off in the scenarios discussed above: accessibility, efficiency, and convenience.

By following the steps outlined above, you can easily integrate speech-to-text functionality into your app and start leveraging its benefits. Experiment with different use cases to see how speech-to-text and voice command recognition can enhance your app's functionality and user experience. So go ahead, implement these features, and watch your app become a smart assistant in the palm of your users' hands!
