To Interface and Beyond: Re-imagine Command User Interface

Introduction

Consider the following questions:

- With AI, can we simplify how users interact with an app instead of adding another AI-model layer on top?

- Can I chat directly with the back end while secure data and APIs stay protected behind it?

- Could CUI (Command User Interface) be an option in a world where VR is being considered for better human-computer interaction?

To answer these questions, we need to explore the following scenario:

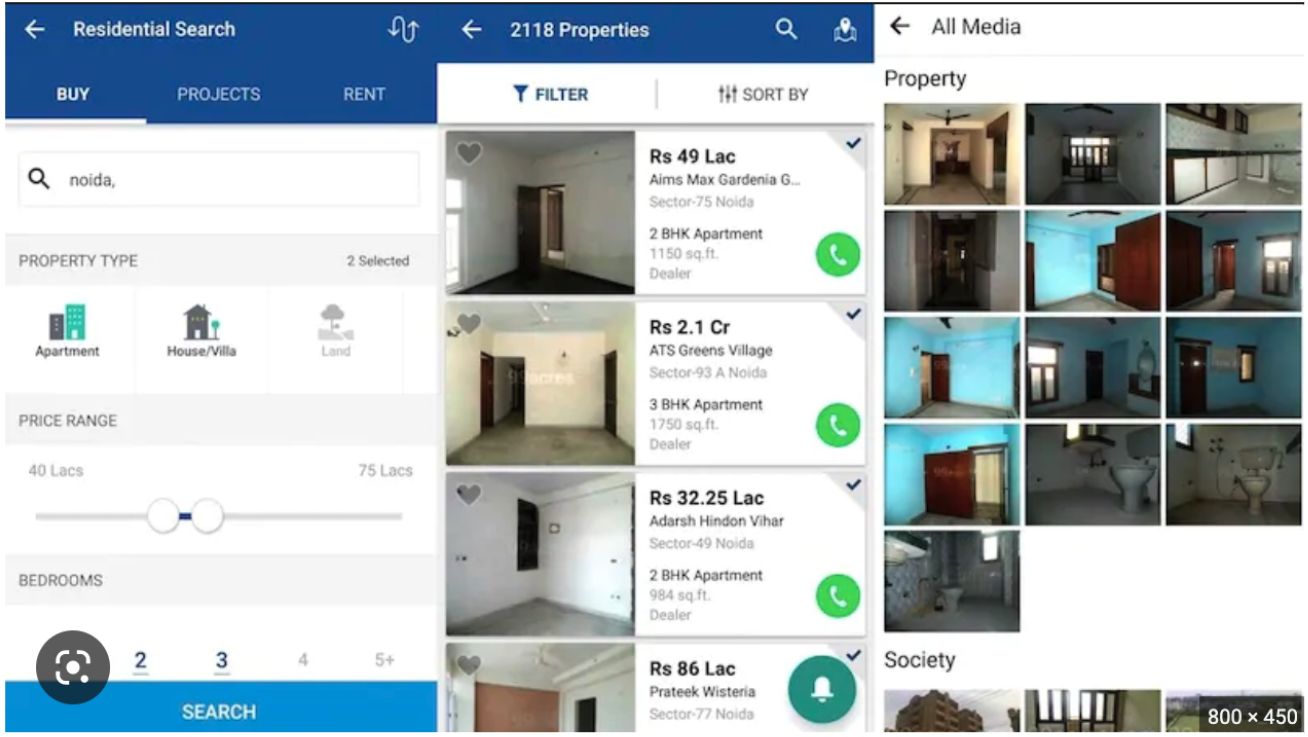

Suppose you need a two-room apartment. You would prefer that it is spacious, above the ground floor but not on the top floor, and within walking distance of your commute.

Now imagine explaining this requirement to our existing applications. Currently, apps accept only a few basic query fields such as location, size, and price range, and these few filters produce thousands of results.

What about additional features such as public transport facilities, broadband coverage, proximity to schools and hospitals, pet-friendly areas, availability of parking spaces, and more?

Can you not tell the application your exact requirements?

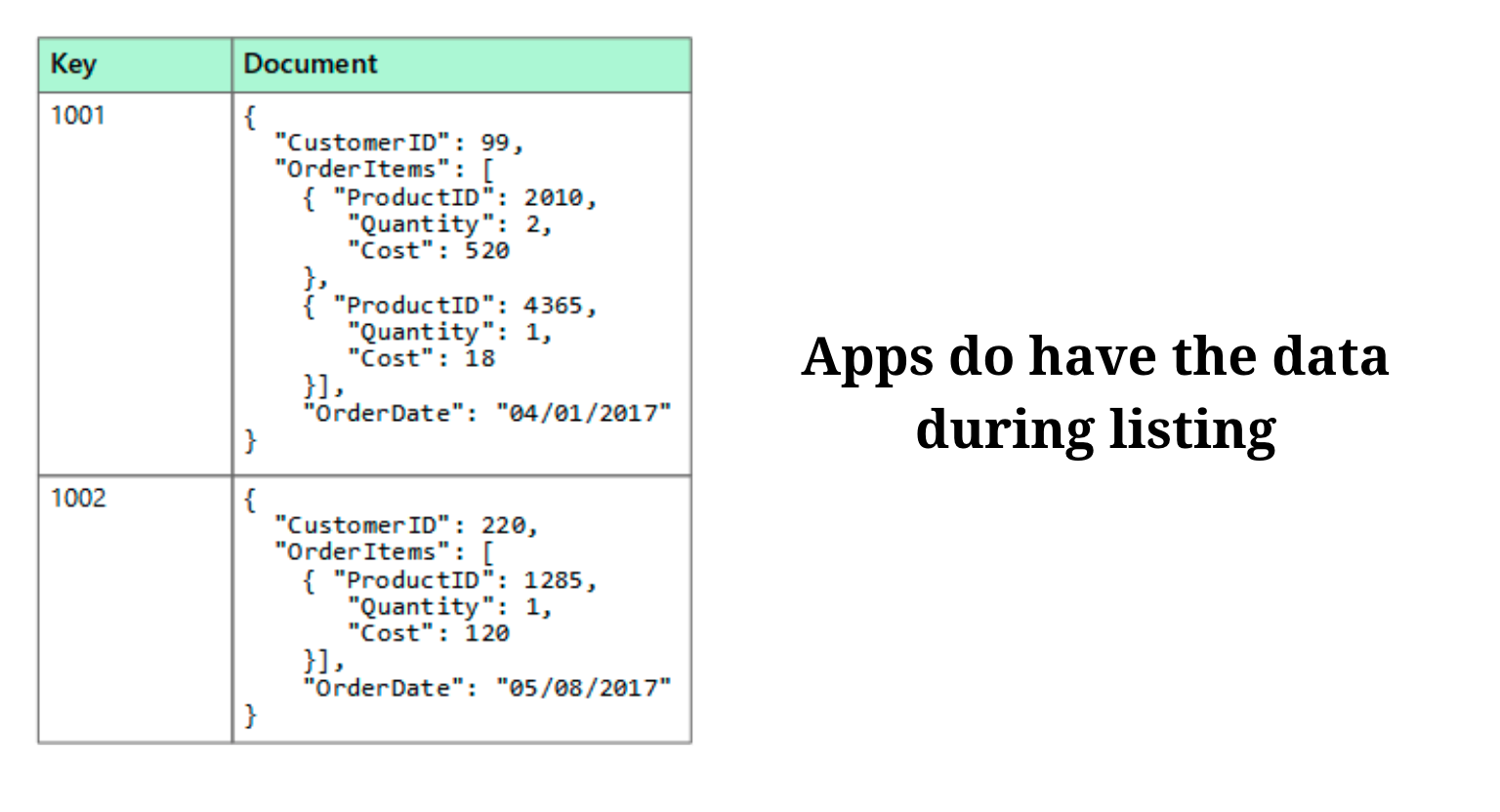

The application back end already has this data, because owners provide it when they create a listing.

But we only use the search bar in the app to enter the location, and that is where an ocean of possibilities gets buried under an inadequate search query.

A Quest for Simpler Interaction

We need a more straightforward interaction and a simpler interface to get the exact information we want from the back end. In other words, we want one result (or a handful) instead of 100,000 results.

Can we simplify layers of navigation?

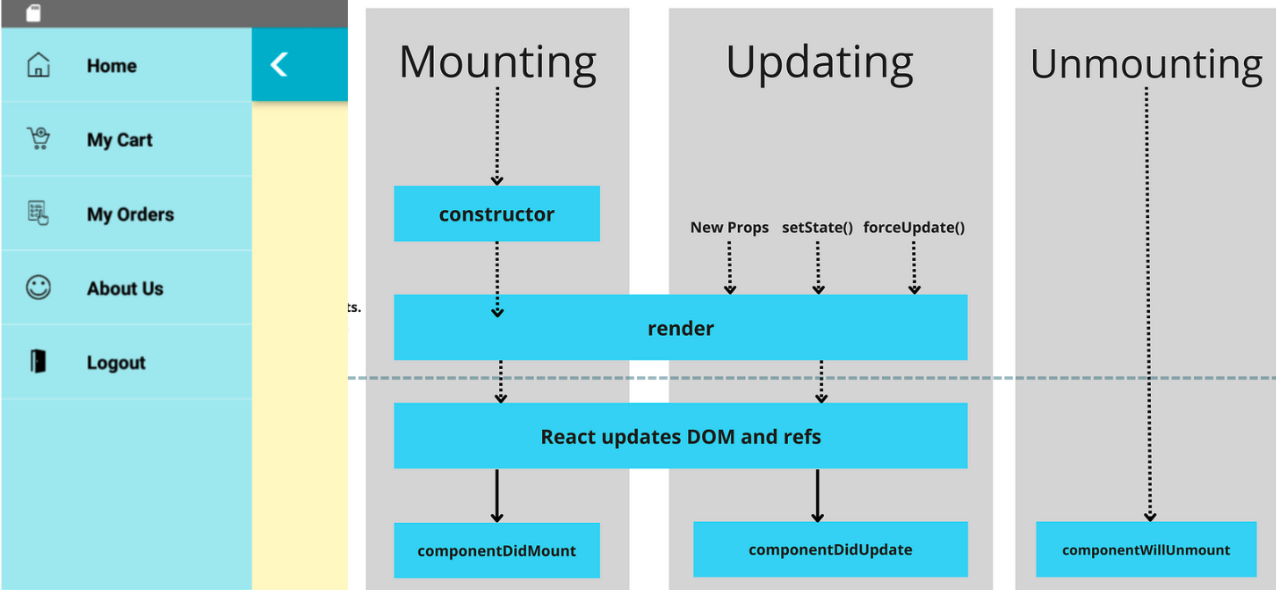

The image below shows the layers of navigation, dynamic and complex components, and dedicated bridge screens that work behind a current quick-service app.

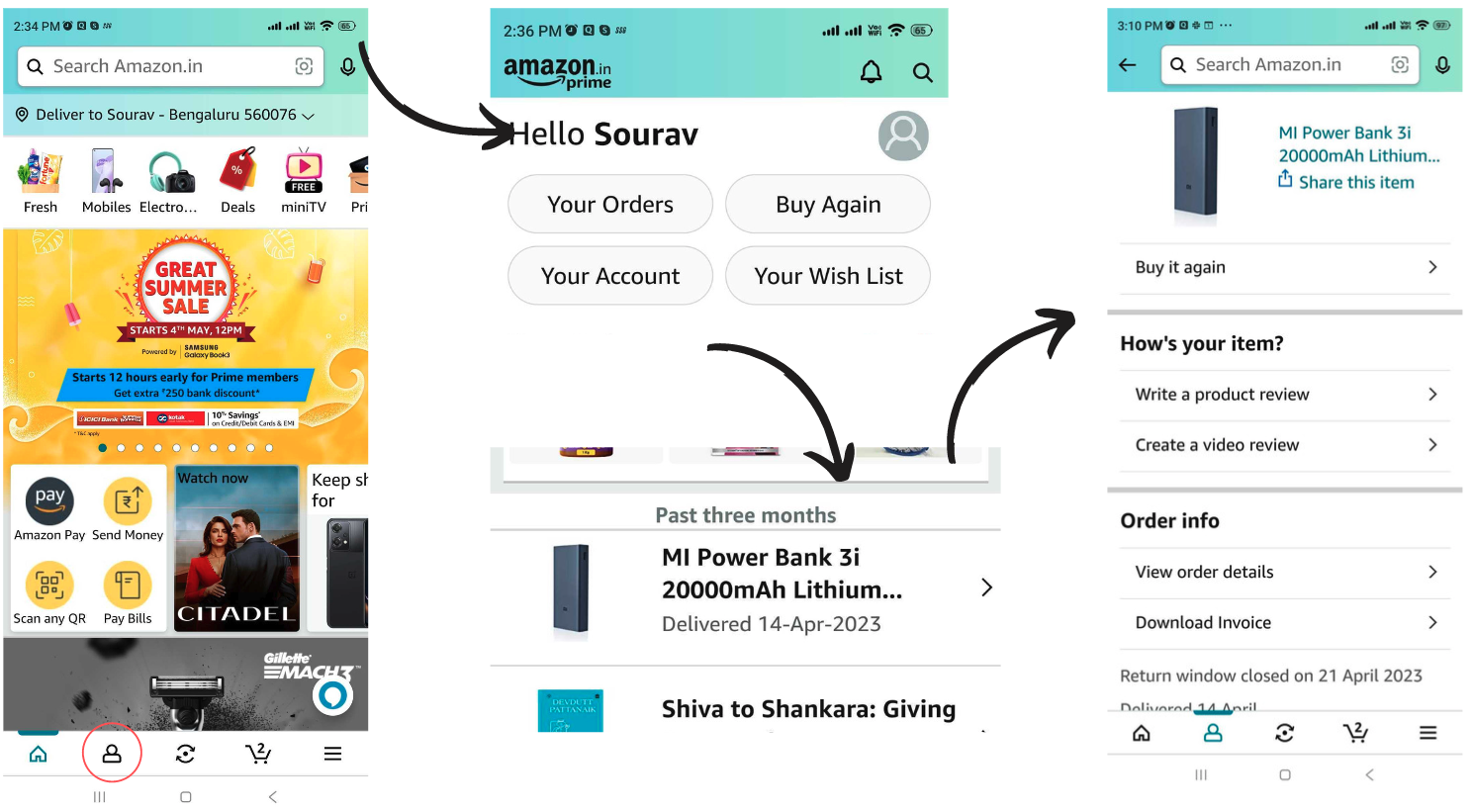

Let us take the example of Amazon. Suppose you are looking for your latest order. You have to click Your Profile, go to Your Orders, scroll through a list covering almost three months of orders, and only then open the details of the order you want.

Again, you must select further details about your order from a dropdown.

A Solution Inspired by the Terminal

Once you have used the terminal, you get used to the terminal.

Wouldn’t it be great if you could command an app to do a certain thing? This leads to further questions. How do we implement this across so many apps that are built as micro front-ends? How do we turn this into a chatbot? Are we talking about chatbots now?

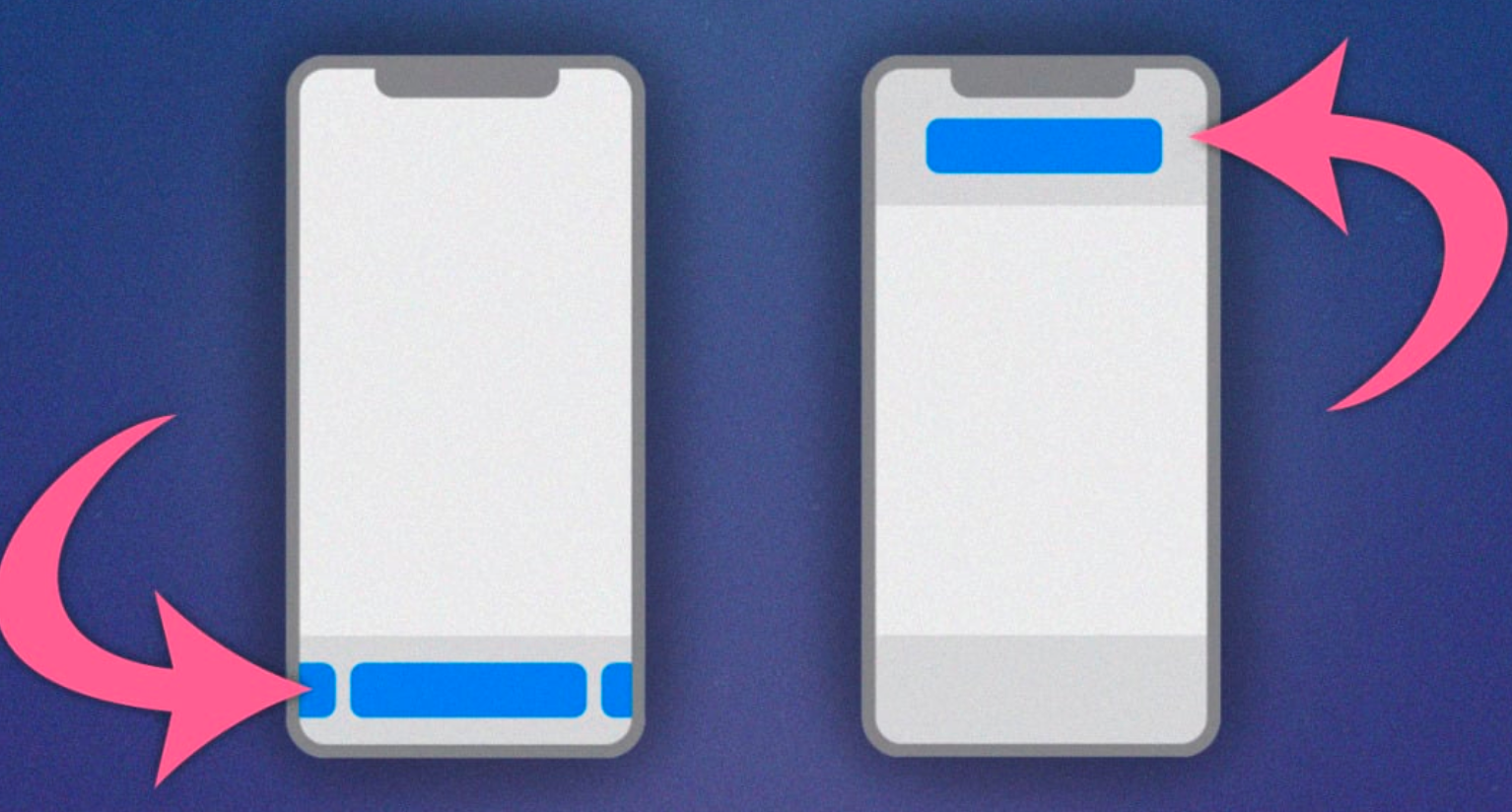

What about the search bar at the top of our apps? We only use it to search a catalog, which then shows us many loosely related things. What if the search bar moved to the bottom, and the app front-end (the whole screen) acted as a space where you keep adding parameters to filter the content? The most relevant result would then sit closest to your thumb.

Note that this is not a new concept. Apple moved the address bar to the bottom of the screen in Safari on iOS 15.

Search is not a one-time thing. You can keep improving the queries again and again.

The idea here is not to create a separate section in the app for a chatbot. Instead, the search bar itself accepts more than one word at a time and becomes a chat box for speaking to the application.
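The idea of search as something you keep refining can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `LISTINGS` data, the field names, and the `SearchSession` class are hypothetical and do not come from any real app. Each new message adds constraints to the ones already stated, and the result list shrinks accordingly.

```python
from dataclasses import dataclass, field

# Hypothetical listing records; the field names are illustrative assumptions.
LISTINGS = [
    {"id": 1, "rooms": 2, "floor": 3, "pets": True, "walk_minutes": 8},
    {"id": 2, "rooms": 2, "floor": 0, "pets": True, "walk_minutes": 5},
    {"id": 3, "rooms": 3, "floor": 2, "pets": False, "walk_minutes": 12},
    {"id": 4, "rooms": 2, "floor": 4, "pets": False, "walk_minutes": 25},
]


@dataclass
class SearchSession:
    """Keeps the filters a user has stated so far in one conversation."""

    filters: dict = field(default_factory=dict)

    def refine(self, **new_filters):
        # Each message adds to (or overrides) the earlier constraints.
        self.filters.update(new_filters)
        return self.results()

    def results(self):
        return [item for item in LISTINGS if self._matches(item)]

    def _matches(self, item):
        for key, wanted in self.filters.items():
            if key == "min_floor":
                ok = item["floor"] >= wanted
            elif key == "max_walk_minutes":
                ok = item["walk_minutes"] <= wanted
            else:
                # Any other key is treated as an exact-match filter.
                ok = item.get(key) == wanted
            if not ok:
                return False
        return True


session = SearchSession()
print([x["id"] for x in session.refine(rooms=2)])              # [1, 2, 4]
print([x["id"] for x in session.refine(min_floor=1)])          # [1, 4]
print([x["id"] for x in session.refine(max_walk_minutes=10)])  # [1]
```

In a real app, a language model would produce the keyword arguments from the user's sentence; here they are passed by hand to keep the sketch self-contained.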

When you pair something like this with a language model such as ChatGPT, you can state four, five, or even more filters in a single sentence instead of selecting from a series of filters and multiple-choice options. The assistance is not limited to filters.

Suppose you walk into a restaurant. Instead of asking only for a dish, you can ask many more questions. You can ask for the day’s special menu, the chef’s recommendations, the ingredients in a dish, whether the dish is vegetarian, vegan, or gluten-free, and so on. Now imagine doing this through the search bar in your app. Usually, we spend more time than necessary in the app searching for what we want to eat. And although the app offers over a thousand options, we rarely explore many of them while ordering.

The Suggestions Do Not End Here

Think of an application like Blinkit. Here, searching for each product one by one takes about 20 minutes, while delivery takes 10 minutes. Instead of searching like this, can we ask for something more? We could ask it to create a party basket for a group of six people with an average age of 23, and Blinkit could come up with, say, nine options to choose from. Or we could ask it to create a monthly grocery basket based on previous orders. This is a good example of how AI-powered language models such as ChatGPT could be used to grow business.
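As a rough illustration of the "monthly basket based on previous orders" idea, the sketch below suggests every item that appears in at least half of a user's past orders. The function name `monthly_basket`, the `min_share` threshold, and the sample orders are assumptions made for this example; a real service would likely use a far richer model.

```python
from collections import Counter


def monthly_basket(previous_orders, min_share=0.5):
    """Suggest items that appear in at least `min_share` of past orders."""
    if not previous_orders:
        return []
    # Count each item once per order, so repeats inside one order do not skew it.
    counts = Counter(item for order in previous_orders for item in set(order))
    threshold = min_share * len(previous_orders)
    return sorted(item for item, n in counts.items() if n >= threshold)


# Hypothetical order history for one user.
orders = [
    ["milk", "bread", "eggs"],
    ["milk", "rice"],
    ["milk", "bread", "soap"],
    ["bread", "eggs", "milk"],
]
print(monthly_basket(orders))  # ['bread', 'eggs', 'milk']
```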

This would not work for applications such as Facebook, Instagram, Snapchat, WhatsApp, Twitter, or Pinterest, where the business would want users to spend more time on the app. On quick service applications, on the other hand, you ask a question and get an answer rather than browsing through a catalog of products.

But this idea is very similar to what chatbots already do, and we have yet to see much success in that field. So far, however, organizations have built only very simple and small chatbot applications. These have also not yet received much investment or been given a wider understanding of the surrounding context.

As Sam Altman puts it in his talk, only a few companies will likely have the budget to build and manage Large Language Models (LLMs) like GPT-3, but many billion-dollar “layer two” companies will be built in the next decade. Companies like Salesforce and Air India have already invested heavily in GPT-powered chatbots and similar digital initiatives.

How Do These Applications Work?

It sounds like a great idea to simply say what we need and keep using the chat as our filter.

But how does this work?

Language models divide your statement into multiple parts. In the party basket example, the model identifies the intent, which is to create a basket of products for a specific occasion. It knows that a basket of products is a collection of items. It also picks up details such as the time, for example tonight.

The recommendation model in the back end then selects products based on several metrics, including previous user behavior, the performance of each product, and user-product interactions.
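One simple way to picture such a recommendation step is a weighted score over the three kinds of signals mentioned above. The sketch below is an assumption-laden illustration, not how any particular back end works: the `ProductSignals` fields, the `WEIGHTS` values, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProductSignals:
    name: str
    user_affinity: float        # previous behavior of this user, scaled 0..1
    product_performance: float  # how well the product does overall, scaled 0..1
    interaction_score: float    # user-product interactions, scaled 0..1


# Hypothetical weights; a real system would learn or tune these.
WEIGHTS = {
    "user_affinity": 0.5,
    "product_performance": 0.3,
    "interaction_score": 0.2,
}


def score(product):
    """Weighted sum of the three signals for one product."""
    return (
        WEIGHTS["user_affinity"] * product.user_affinity
        + WEIGHTS["product_performance"] * product.product_performance
        + WEIGHTS["interaction_score"] * product.interaction_score
    )


def recommend(products, top_n=3):
    """Return the names of the top_n highest-scoring products."""
    ranked = sorted(products, key=score, reverse=True)
    return [p.name for p in ranked[:top_n]]


# Made-up candidates for a party basket.
candidates = [
    ProductSignals("chips", 0.9, 0.8, 0.7),
    ProductSignals("soda", 0.6, 0.9, 0.5),
    ProductSignals("detergent", 0.1, 0.7, 0.0),
    ProductSignals("paper cups", 0.4, 0.5, 0.9),
]
print(recommend(candidates))  # ['chips', 'soda', 'paper cups']
```

A weighted sum is used here only because it is easy to read; the source does not say which model is used in practice.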

Intent and Entity

An intent represents the purpose or goal behind your query, while an entity is a specific piece of information within the query. Let us look at some examples:

Query: What is the weather in Bangalore?

Intent: Weather inquiry

Entity: Location (Bangalore)

Query: Things to do in Bangalore

Intent: Recommendation inquiry

Entity: Location (Bangalore)

Query: What is the weather in Bangalore in Celsius?

Intent: Weather inquiry

Entity: Location (Bangalore), unit (Celsius)
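The three examples above can be reproduced with a tiny rule-based parser. This is a minimal sketch for illustration only: the keyword lists, the `parse_query` function, and the intent labels are assumptions, and a production system would rely on a trained language model rather than hand-written rules.

```python
import re

# Hypothetical keyword rules; a real system would use a trained model instead.
INTENT_KEYWORDS = {
    "weather_inquiry": ("weather",),
    "recommendation_inquiry": ("things to do", "recommend"),
}
KNOWN_LOCATIONS = ("Bangalore",)
KNOWN_UNITS = ("Celsius", "Fahrenheit")


def _find(terms, query):
    """Return the first known term that occurs as a whole word in the query."""
    for term in terms:
        if re.search(rf"\b{re.escape(term)}\b", query, re.IGNORECASE):
            return term
    return None


def parse_query(query):
    """Split a query into an intent label and a dict of entities."""
    lowered = query.lower()
    intent = next(
        (
            name
            for name, keywords in INTENT_KEYWORDS.items()
            if any(keyword in lowered for keyword in keywords)
        ),
        "unknown",
    )
    entities = {}
    location = _find(KNOWN_LOCATIONS, query)
    if location:
        entities["location"] = location
    unit = _find(KNOWN_UNITS, query)
    if unit:
        entities["unit"] = unit
    return {"intent": intent, "entities": entities}


print(parse_query("What is the weather in Bangalore?"))
print(parse_query("Things to do in Bangalore"))
print(parse_query("What is the weather in Bangalore in Celsius?"))
```

Note how the first and third queries share the same intent and differ only in the entities extracted, which is exactly what lets the back end reuse one handler with different parameters.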

Summing Up

Since we are speaking of learning models, let us finish this article the AI way of learning. We have discussed how to resolve user requirements from the user's intent statement. Now let us do the unsupervised part of the learning.

Suppose a super-app, like MakeMyTrip, provides solutions for the tourism sector. How many business solutions can we map by learning the user's requirements from a single statement?

Suppose you have a query:

I want to travel to Europe in June. What are some affordable destinations with good weather and cultural attractions?

The intent for this would be: Recommendation inquiry

The entities would be: Timeframe (June), destination type (Europe), budget (affordable), weather (good), and attractions (cultural)

Besides the basics of booking trains, buses, and hotels, the business can offer plenty of guidance to improve the experience: tour booking and scheduling, restaurant recommendations based on our food choices and budget, budgeting tools, a cultural information guide, luggage packing suggestions, emergency assistance, travel insurance, and social networking for meeting other travelers.

There are endless possibilities.

This article summarizes Sourav Ganguly's talk at our recent React Native meetup held at GeekyAnts, Bangalore. You can check out the entire video below.
